TRECVID is a laboratory-style evaluation that attempts to model real-world situations or significant component tasks involved in such situations. In 2006 TRECVID will complete a 2-year cycle on English, Arabic, and Chinese news video. There will be three system tasks with associated tests and one exploratory task. Participants must complete at least one of the following four tasks in order to attend the workshop:

- shot boundary determination

- high-level feature extraction

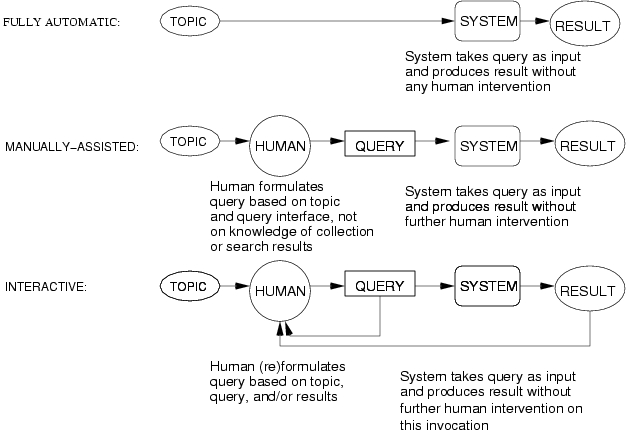

- search (interactive, manually-assisted, and/or fully automatic)

- rushes exploitation (exploratory)

For past participants, here are some changes to note:

- Although the amount of test data from sources used in 2006 will be as large as or larger than in 2005, there will be significant additional data from channels and/or programs not included in the data for 2005. This is expected to provide interesting information on the extent to which detectors generalize.

- Participants in the feature task will be required to submit results for all features from the common annotation data for 2005 listed below. A subset of 20 of those will be chosen by NIST and evaluated. This is intended to encourage generic methods for development of detectors.

- Additional effort will be made to ensure that interactive search runs come from experiments designed to allow comparison of runs within a site independent of the main effect of the human in the loop. This will be worked out with the participants before the guidelines are complete.

- Following the VACE III goals, topics asking for video of events will be much more frequent this year - exploring the limits of one-keyframe-per-shot approaches for this kind of topic and encouraging exploration beyond those limits.

- Most of the data will again be distributed on hard drives, but at the suggestion of LDC the file system will be ReiserFS, to help avoid the data corruption and access problems encountered in 2005.

2.1 Shot boundary detection:

Shots are fundamental units of video, useful for higher-level processing. The task is as follows: identify the shot boundaries with their location and type (cut or gradual) in the given video clip(s).

2.2 High-level feature extraction:

Various high-level semantic features, concepts such as "Indoor/Outdoor", "People", "Speech" etc., occur frequently in video databases. The proposed task will contribute to work on a benchmark for evaluating the effectiveness of detection methods for semantic concepts.

The task is as follows: given the feature test collection, the common shot boundary reference for the feature extraction test collection, and the list of feature definitions (see below), participants will return for each feature a list of at most 2000 shots from the test collection, ranked by the likelihood that the feature is present. Each feature is assumed to be binary, i.e., it is either present or absent in the given reference shot.
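A minimal sketch of the output constraint, assuming each shot already has a single detector confidence score (how that score is produced is left to each system, and the function name `rank_shots` is hypothetical): sort the shots by score and keep at most 2000.

```python
import heapq

MAX_SHOTS_PER_FEATURE = 2000  # submission limit for one feature in one run


def rank_shots(shot_scores, limit=MAX_SHOTS_PER_FEATURE):
    """Return up to `limit` shot ids, highest detector confidence first.

    shot_scores: dict mapping a common-reference shot id to a confidence
    score (hypothetical input; higher means the feature is more likely present).
    """
    top = heapq.nlargest(limit, shot_scores.items(), key=lambda item: item[1])
    return [shot_id for shot_id, _score in top]


scores = {"shot1_1": 0.2, "shot1_2": 0.9, "shot1_3": 0.5}
print(rank_shots(scores, limit=2))  # ['shot1_2', 'shot1_3']
```

`heapq.nlargest` avoids sorting all scores when only the top 2000 are needed; ties keep their input order.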

All feature detection submissions will be made available to all participants for use in the search task - unless the submitter explicitly asks NIST before submission not to do this.

Description of high-level features to be detected:

The descriptions are those used in the common annotation effort. They are meant for humans, e.g., assessors/annotators creating truth data and system developers attempting to automate feature detection. They are not meant to indicate how automatic detection should be achieved.

If the feature is true for some frame (sequence) within the shot, then it is true for the shot; and vice versa. This is a simplification adopted for the benefits it affords in pooling of results and approximating the basis for calculating recall.
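The shot-level rule above reduces to a logical OR over frames. A minimal sketch, assuming per-frame truth labels are available as booleans (a hypothetical representation; the guidelines only define the rule):

```python
def shot_label(frame_labels):
    """Shot-level truth: True if the feature is present in at least one frame."""
    return any(frame_labels)


def shot_labels(frame_labels_by_shot):
    """Apply the shot-level rule to every shot in a dict of shot id -> frame labels."""
    return {shot_id: shot_label(labels) for shot_id, labels in frame_labels_by_shot.items()}


print(shot_labels({"shot1_1": [False, True, False], "shot1_2": [False, False]}))
# {'shot1_1': True, 'shot1_2': False}
```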

NOTE: In the following, "contains x" is short for "contains x to a degree sufficient for x to be recognizable as x to a human". This means, among other things, that unless explicitly stated, partial visibility or audibility may suffice.

Selection of high-level features to be detected:

In 2006 participants in the high-level feature task must submit results for all of the following features. NIST will then choose 20 of the features and evaluate submissions for those. Use the following numbers when submitting the features.

NOTE: NIST will instruct the assessors during the manual evaluation of the feature task submissions as follows. The fact that a segment contains video of physical objects representing the topic target, such as photos, paintings, models, or toy versions of the topic target, should NOT be grounds for judging the feature to be true for the segment. Containing video of the target within video may be grounds for doing so.

2.3 Search:

Search is a high-level task that includes at least query-based retrieval and browsing. The search task models that of an intelligence analyst or analogous worker who is looking for segments of video containing persons, objects, events, locations, etc. of interest. These persons, objects, etc. may be peripheral or accidental to the original subject of the video. The task is as follows: given the search test collection, a multimedia statement of information need (topic), and the common shot boundary reference for the search test collection, return a ranked list of at most 1000 common reference shots from the test collection that best satisfy the need. Please note the following restrictions for this task:

2.4 Rushes exploitation:

Rushes are the raw material (extra video, B-roll footage) used to produce a video. 20 to 40 times as much material may be shot as actually becomes part of the finished product. Rushes usually have only natural sound. Actors are only sometimes present, so little if any information is encoded in speech. Rushes contain many frames or sequences of frames that are highly repetitive, e.g., many takes of the same scene redone due to errors (e.g., an actor gets his lines wrong, a plane flies over, etc.), long segments in which the camera is fixed on a given scene or barely moving, etc. A significant part of the material might qualify as stock footage: reusable shots of people, objects, events, locations, etc. Rushes may share some characteristics with "ground reconnaissance" video.

Groups participating in this task will develop and demonstrate at least a basic toolkit for support of exploratory search on highly redundant rushes data. The toolkit should include the ability to ingest video and subject it to whatever analysis your subsequent toolkit activities will require. The toolkit need not be a complete, polished, fully interactive product yet.

The minimal required goals of the toolkit are the ability to:

- remove/hide redundancy of as many kinds as possible (i.e., summarize)

- organize/present non-redundant material according to at least 6 features. These features should be well-motivated from the point of view of some user/task context and cannot all be of one type (e.g., not all cinematographic or camera setting).

Here are some possible examples. We took some of the features that MediaMill had some success in detecting in the 2005 BBC rushes using models trained on broadcast news, some features noted as of interest to DTO in the VACE III BAA section on ground reconnaissance data, and some from the trecvid.rushes discussion.

- interview or not (note: interviews may appear as monologues)

- fixed camera or not

- indoor setting or not

- urban setting or not

- person present or not

- water body present or not

Groups may add additional functionality as they are able.
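One simple way to approach the first required goal (removing or hiding redundant material such as repeated takes) is to keep a segment only when it is not too similar to anything already kept. The sketch below assumes each segment has already been reduced to a numeric feature vector (for example, a color histogram) and uses cosine similarity with an arbitrary threshold; neither the features nor the threshold come from these guidelines, and `drop_redundant` is a hypothetical name.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length numeric vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def drop_redundant(segments, threshold=0.95):
    """Greedy near-duplicate removal over (segment_id, feature_vector) pairs.

    A segment is kept only if its similarity to every already-kept segment is
    below `threshold`; the threshold value is an illustrative assumption.
    """
    kept = []
    for segment_id, vector in segments:
        if all(cosine(vector, kept_vector) < threshold for _, kept_vector in kept):
            kept.append((segment_id, vector))
    return [segment_id for segment_id, _ in kept]


takes = [("take1", [1.0, 0.0, 0.0]), ("take2", [0.99, 0.01, 0.0]), ("other", [0.0, 1.0, 0.0])]
print(drop_redundant(takes))  # ['take1', 'other']
```

The greedy pass is quadratic in the number of kept segments, which is acceptable for a sketch but would need indexing for 50 hours of rushes.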

Participants will be required to perform their own evaluation and present the results. No standard keyframes or shot boundaries will be provided as we would like to encourage innovation in approach.

The results of this exploratory task will provide input to planning for future TRECVID workshops about the feasibility of shifting to work on unproduced video - both with respect to what systems can do and how able we are to evaluate them. Both involve difficult research issues.

3. Video data:

A number of MPEG-1 datasets are available for use in TRECVID 2006. We describe them here and then indicate below which data will be used for development versus test for each task.

Television news from November 2004

The Linguistic Data Consortium (LDC) collected the following video material and secured rights for research use. Note that although the amount of test data from sources used in 2006 will be as large as or larger than in 2005, there will be significant additional data from channels and/or programs not included in the data for 2005. This is expected to provide interesting information on the extent to which detectors generalize.

| TRECVID-use | Lang | Source | Program | Hours |
|---|---|---|---|---|
| tv5 tv6 | Eng | NBC | NIGHTLYNEWS | 9.0 |
| tv5 tv6 | Eng | CNN | LIVEFROM | 14.5 |
| tv6 | Eng | CNN | COOPER | 8.3 |
| tv6 | Eng | MSN | NEWSLIVE | 14.5 |
| | | | English subtotal | 46.3 |
| tv5 tv6 | Chi | CCTV4 | DAILY_NEWS | 9.2 |
| tv6 | Chi | PHOENIX | GOODMORNCN | 7.5 |
| tv6 | Chi | NTDTV | ECONFRNT | 7.8 |
| tv6 | Chi | NTDTV | FOCUSINT | 5.2 |
| | | | Chinese subtotal | 29.7 |
| tv5 tv6 | Ara | LBC | LBCNAHAR | 35.3 |
| tv5 tv6 | Ara | LBC | LBCNEWS | 39.5 |
| tv6 | Ara | ALH | HURRA_NEWS | 7.8 |
| | | | Arabic subtotal | 82.6 |
| | | | Total | 158.6 hours (136,026,556,416 bytes) |

Rushes exploitation

About 50 hours of rushes have been provided by the BBC Archive.

3.1 Development versus test data

Groups that participated in 2005 should already have the 2005 video and the common annotation of the 2005 development data as training data and will not get a new copy. New participants may request a copy of that data for training. Additional annotation of the 2005 development data is expected to be donated by the LSCOM workshop (for a set of 500 or more features), by the MediaMill team at the University of Amsterdam (for 101 features, as part of their larger proposal to donate a common discriminative baseline), and by the Centre for Digital Video Processing at Dublin City University (for MPEG-7 XM features).

A random sample of about 7.5 hours will be removed from the 2006 television news data and the resulting data set used as shot boundary test data. The remaining hours of television news will be used as the test data for the search and high-level feature tasks. The partitioning of the rushes will be decided as part of the rushes task definition.

3.2 Data distribution

The shot boundary test data will be express shipped by NIST to participants on DVDs (DVD+R).

Distribution of all other development data and the remaining test data will be handled by LDC using 250GB loaner IDE drives formatted with ReiserFS. These must be returned or purchased within 3 weeks of loading on your system unless you have gotten approval for a delay from LDC in advance. The only charge to participants for test data will be the cost of shipping the drive(s) back to LDC. Please be sure to use a shipper who allows you to track the drive until it reaches LDC and your responsibility ends. More information about the data will be provided on the TRECVID website starting in March as we know more.

3.3 Ancillary data associated with the test data

Provided with the broadcast news test data (*.mpg) on the loaner drive will be a number of other datasets.

Output of an automatic speech recognition (ASR) system

The automatic speech recognition output we expect to provide will be the output of an off-the-shelf product, probably with no tuning to the TRECVID data. LDC will provide the ASR output. Any mention of commercial products is for information only; it does not imply recommendation or endorsement by NIST.

Output of a machine translation system (X->English)

The machine translation output we expect to provide will be the output of an off-the-shelf product, probably with no tuning to the TRECVID data. BBN will provide the MT (Chinese/Arabic -> English) output in April. We will probably distribute this material by download if it does not arrive in time to be included on the hard drives. Any mention of commercial products is for information only; it does not imply recommendation or endorsement by NIST.

Common shot boundary reference and keyframes:

Christian Petersohn at the Fraunhofer (Heinrich Hertz) Institute in Berlin will once again provide the master shot reference. Please use the following reference in your papers:

C. Petersohn. "Fraunhofer HHI at TRECVID 2004: Shot Boundary Detection System", TREC Video Retrieval Evaluation Online Proceedings, TRECVID, 2004. URL: www-nlpir.nist.gov/projects/tvpubs/tvpapers04/fraunhofer.pdf

The Dublin City University team will again format the reference and create a common set of keyframes. Our thanks to both. The following paragraphs describe the method used in 2005, which will be repeated with the data for 2006.

To create the master list of shots, the video was segmented. The results of this pass are called subshots. Because the master shot reference is designed for use in manual assessment, a second pass over the segmentation was made to create master shots of at least 2 seconds in length. These master shots are the ones to be used in submitting results for the feature and search tasks. In the second pass, starting at the beginning of each file, the subshots were aggregated, if necessary, until the current shot was at least 2 seconds in duration, at which point the aggregation began anew with the next subshot.
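The second pass can be sketched as a greedy accumulation over subshots in file order. This is an illustration, not the Fraunhofer/DCU code: it assumes subshots are inclusive `(first_frame, last_frame)` pairs at 29.97 fps, and, because the text does not say what happens to a remainder shorter than 2 seconds at the end of a file, it simply keeps that remainder as a final shot.

```python
from fractions import Fraction

FPS = Fraction(30000, 1001)  # assumed frame rate of 29.97 fps
MIN_DURATION_S = 2           # minimum master shot duration in seconds


def aggregate_subshots(subshots, fps=FPS, min_duration=MIN_DURATION_S):
    """Greedily merge contiguous subshots into master shots of >= min_duration seconds.

    subshots: list of inclusive (first_frame, last_frame) tuples in file order.
    Returns a list of master shots, each a list of subshot indices.
    """
    masters, current, current_frames = [], [], 0
    for index, (first, last) in enumerate(subshots):
        current.append(index)
        current_frames += last - first + 1
        if current_frames / fps >= min_duration:
            masters.append(current)  # long enough: close this master shot
            current, current_frames = [], 0
    if current:
        # Assumption: a short trailing remainder is kept as its own final shot.
        masters.append(current)
    return masters


print(aggregate_subshots([(0, 29), (30, 89), (90, 149), (150, 159)]))
# [[0, 1], [2], [3]]
```

Using `Fraction` keeps the 2-second comparison exact at 30000/1001 fps, so a 60-frame subshot (2.002 s) is correctly judged long enough.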

The keyframes were selected by going to the middle frame of the shot, then searching left and right of that frame to locate the nearest I-frame. This then became the keyframe and was extracted. Keyframes have been provided at both the subshot (NRKF) and master shot (RKF) levels.

In a small number of cases (all of them subshots) there was no I-frame within the subshot boundaries. When this occurred, the middle frame was selected. (One anomaly: at the end of the first video in the test collection, a subshot occurs outside a master shot.)
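The keyframe rule can be sketched as an outward search from the middle frame. The input representation (a set of I-frame numbers) and the tie-break toward the earlier frame when two I-frames are equally close are assumptions; the text does not specify either.

```python
def select_keyframe(first, last, i_frames):
    """Pick the I-frame nearest the middle of the inclusive span [first, last].

    i_frames: set of frame numbers that are I-frames (hypothetical input).
    Ties are broken toward the earlier frame (an assumption).
    Falls back to the middle frame when the span contains no I-frame.
    """
    middle = (first + last) // 2
    for offset in range(max(middle - first, last - middle) + 1):
        for candidate in (middle - offset, middle + offset):  # left, then right
            if first <= candidate <= last and candidate in i_frames:
                return candidate
    return middle


print(select_keyframe(0, 20, {3, 12, 18}))  # 12
print(select_keyframe(0, 20, {25}))         # 10 (no I-frame inside the span)
```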

The emphasis in the common shot boundary reference will be on the shots, not the transitions. The shots are contiguous: there are no gaps between them and they do not overlap. The media time format is based on the Gregorian day time (ISO 8601) norm. Fractions are defined by counting pre-specified fractions of a second. In our case, the frame rate will likely be 29.97 (30000/1001) frames per second, so the duration of one frame, 1001/30000 of a second, is specified as "PT1001N30000F" (1001 fractions, with 30000 fractions per second).
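A minimal sketch of converting frame counts to and from this duration notation. The helper names are hypothetical, and the choice to emit only a seconds (`S`) component plus fractions, without splitting out hours or minutes, is an assumption; the `time.elements` file in the common shot boundary directory is the authority on the exact format.

```python
import re
from fractions import Fraction

FRACTIONS_PER_SECOND = 30000  # the "30000F" component
FRACTIONS_PER_FRAME = 1001    # the "1001N" component: one frame at 29.97 fps


def frames_to_duration(num_frames):
    """Format a frame count as a duration such as "PT1001N30000F" (seconds + fractions only)."""
    seconds, fractions = divmod(num_frames * FRACTIONS_PER_FRAME, FRACTIONS_PER_SECOND)
    seconds_part = f"{seconds}S" if seconds else ""
    return f"PT{seconds_part}{fractions}N{FRACTIONS_PER_SECOND}F"


def duration_to_seconds(duration):
    """Parse the restricted form produced above back into an exact number of seconds."""
    match = re.fullmatch(r"PT(?:(\d+)S)?(\d+)N(\d+)F", duration)
    if match is None:
        raise ValueError(f"unsupported duration: {duration!r}")
    seconds, fractions, per_second = match.groups()
    return int(seconds or 0) + Fraction(int(fractions), int(per_second))


print(frames_to_duration(1))   # PT1001N30000F
print(frames_to_duration(30))  # PT1S30N30000F
```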

The video id has the format "XXX" and the shot id "shotXXX_YYY". "XXX" is the sequence number to which the video file name is mapped; this mapping will be listed in the "collection.xml" file. "YYY" is the sequence number of the shot. Keyframes are identified by a suffix: "_RKF" for the main keyframe (one per shot) or "_NRKF" for additional keyframes derived from subshots that were merged so that shots have a minimum duration of 2 seconds.
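A small parser for these identifiers, following the NRKF spelling used for subshot keyframes. The regular expression is a hypothetical reading of the format described above; in particular, the optional trailing `_<n>` keyframe index is an assumption, not something these guidelines state.

```python
import re

# Hypothetical pattern: "shot<video>_<shot>", optionally followed by "_RKF" or
# "_NRKF"; the trailing "_<n>" keyframe index is an assumption.
ID_PATTERN = re.compile(
    r"shot(?P<video>\d+)_(?P<shot>\d+)(?:_(?P<kind>RKF|NRKF)(?:_(?P<index>\d+))?)?"
)


def parse_id(identifier):
    """Split a shot or keyframe id into its numeric parts and keyframe kind."""
    match = ID_PATTERN.fullmatch(identifier)
    if match is None:
        raise ValueError(f"not a shot or keyframe id: {identifier!r}")
    index = match.group("index")
    return {
        "video": int(match.group("video")),  # look up the file name in collection.xml
        "shot": int(match.group("shot")),
        "kind": match.group("kind"),         # None for a plain shot id
        "index": int(index) if index is not None else None,
    }


print(parse_id("shot12_345"))
# {'video': 12, 'shot': 345, 'kind': None, 'index': None}
```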

The common shot boundary directory will contain these file(type)s:

- shots2006 - a directory with one file of shot information for each video file in the development/test collection

- xxx.mp7.xml - master shot list for video with id "xxx" in collection.xml

- collection.xml - a list of the files in the collection

- README - info on the segmentation

- time.elements - info on the meaning/format of the MPEG-7 MediaTimePoint and MediaDuration elements

3.5 Restrictions on use of development and test data

Each participating group is responsible for adhering to the letter and spirit of these rules, the intent of which is to make the TRECVID evaluation realistic, fair, and maximally informative about system effectiveness as opposed to other confounding effects on performance. Submissions that, in the judgment of the coordinators and NIST, do not comply will not be accepted.

Test data

The test data shipped by LDC cannot be used for system development and system developers should have no knowledge of it until after they have submitted their results for evaluation to NIST. Depending on the size of the team and tasks undertaken, this may mean isolating certain team members from certain information or operations, freezing system development early, etc.

Participants may use donated feature extraction output from the test collection but incorporation of such features should be automatic so that system development is not affected by knowledge of the extracted features. Anyone doing searches must be isolated from knowledge of that output.

Participants cannot use the knowledge that the test collection comes from news video recorded during a known time period in the development of their systems. This would be unrealistic.

Development data

The development data is intended for the participants' use in developing their systems. It is up to the participants how the development data is used, e.g., divided into training and validation data, etc.

Other data sets created by LDC for earlier evaluations and derived from the same original videos as the test data cannot be used in developing systems for TRECVID 2006.

If participants use the output of an ASR/MT system, they must submit at least one run using the English ASR/MT provided by NIST. They are free to use the output of other ASR/MT systems in additional runs.

Participants may use other development resources not excluded in these guidelines. Such resources should be reported at the workshop. Note that use of other resources will change the submission's status with respect to system development type, which is described next.

- A - system trained only on common TRECVID development collection data, the common annotation of such data, and any truth data created at NIST for earlier topics and test data, which is publicly available. For example, common annotation of 2003 and 2005 training data and NIST's manually created truth data for 2003, 2004, and 2005 could in theory be used to train type A systems in 2006. Allowed training data for type A systems does NOT include the MediaMill baseline.

Since by design we have multiple annotators for most of the common training data features but it is not at all clear how best to combine those sources of evidence, it seems advisable to allow groups using the common annotation to choose a subset and still qualify as using type A training. This may be equivalent to adding new negative judgments. However, no new positive judgments can be added.

- B - system trained only on common development collection but not on (just) common annotation of it

- C - system is not of type A or B
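The three training types can be read as a small decision procedure. The sketch below is a simplified reading of the definitions above: the field names are hypothetical, and "common annotation" is taken to include a subset of the annotators' judgments (which the text allows for type A) but not new positive judgments.

```python
from dataclasses import dataclass


@dataclass
class TrainingResources:
    """Hypothetical summary of what a system was trained on."""
    only_common_dev_collection: bool            # no video beyond the common TRECVID development collection
    only_common_annotation_or_nist_truth: bool  # labels only from common annotation (or a subset) and public NIST truth data
    uses_mediamill_baseline: bool = False       # the MediaMill baseline is excluded from type A


def system_type(resources):
    """Return "A", "B", or "C" under this simplified reading of the definitions."""
    if not resources.only_common_dev_collection:
        return "C"
    if resources.only_common_annotation_or_nist_truth and not resources.uses_mediamill_baseline:
        return "A"
    return "B"


print(system_type(TrainingResources(True, True, uses_mediamill_baseline=True)))  # B
```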

3.6 Data license agreements for active participants

In order to be eligible to receive the test data, you must have applied for participation in TRECVID, be acknowledged as an active participant, have completed the relevant permission forms (from the active participant's area) and faxed them (Attention: Lori Buckland) to  in the US. Include a cover sheet with your fax that identifies you, your organization, your email address, and the fact that you are requesting the TRECVID 2005 and/or 2006 data.

4. Topics:

4.1 Example types of video needs

I'm interested in video material containing:

- a specific person

- one or more instances of a category of people

- a specific thing

- one or more instances of a category of things

- a specific event/activity

- one or more instances of a category of events/activities

- a specific location

- one or more instances of a category of locations

- combinations of the above

Topics may target commercials as well as news content.

4.2 Topics:

The topics, formatted multimedia statements of information need, will be developed by NIST, who will control their distribution. The topics will express the need for video concerning people, things, events, locations, etc. and combinations of these. Candidate topics (text only) will be created at NIST by examining a large subset of the test collection videos without reference to the audio, looking for candidate topic targets. Note: Following the VACE III goals, topics asking for video of events will be much more frequent this year - exploring the limits of one-keyframe-per-shot approaches for this kind of topic and encouraging exploration beyond those limits. Accepted topics will be enhanced with non-textual examples from the Web if possible and from the development data if need be. The goal is to create 24 topics.

* Note: The identification of any commercial product or trade name does not imply endorsement or recommendation by the National Institute of Standards and Technology

- Topics describe the information need. They are input to systems and a guide to humans assessing the relevance of system output.

- Topics are multimedia objects - subject to the nature of the need and the questioner's choice of expression

- As realistic in intent and expression as possible

- Template for topic:

  - Title

  - Brief textual description of the information need (this text may contain references to the examples)

  - Examples* of what is wanted:

    - reference to video clip

      - Optional brief textual clarification of the example's relation to the need

    - reference to image

      - Optional brief textual clarification of the example's relation to the need

    - reference to audio

      - Optional brief textual clarification of the example's relation to the need

5. Submissions and Evaluations:

Please note: Only submissions that are valid when checked against the supplied DTDs will be accepted. You must check your submission before submitting it. NIST reserves the right to reject any submission that does not parse correctly against the provided DTD(s). Various checkers exist, e.g., Xerces-J.

The results of the evaluation will be made available to attendees at the TRECVID workshop and will be published in the final proceedings and/or on the TRECVID website within six months after the workshop. All submissions will likewise be available to interested researchers via the TRECVID website within six months of the workshop.

5.1 Shot boundary detection

- Participating groups may submit up to 10 runs. Please note that required attributes for complexity information (total decode time (secs), total segmentation time (secs), and processor type) for each run are part of the submission DTD. All runs will be evaluated.

- Here is the DTD for the shot boundary detection results of one run, one for the enclosing shotBoundaryResults element, and a small, partial example. Please place the output of each run in a separate file. You can tar/zip the (up to 10) run files together for submission. Remember that each run must test one variant of your system against all the test files. Please check your submission to see that it is well-formed.

- Please send your submissions (up to 10 runs) in an email to over at nist.gov. Indicate somewhere (e.g., in the subject line) which group you are attached to so that we match you up with the active participant's database.

- Automatic comparison to human-annotated reference. Software for this comparison is available under Tools used by TRECVID from the main TRECVID page.

- Measures:

- All transitions: for each file, precision and recall for detection; for each run, the mean precision and recall per reference transition across all files

- Gradual transitions only: "frame-recall" and "frame precision" will be calculated for each detected gradual reference transition. Averages per detected gradual reference transition will be calculated for each file and for each submitted run. Details are available.
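A minimal sketch of both measures. The matching rule is an assumption for illustration (a detection matches a reference transition when it overlaps the reference span widened by a few frames, each transition matched at most once); the official rules are those implemented in the NIST comparison software. Run-level precision and recall pool counts over all files, so each reference transition carries equal weight; calling `run_precision_recall` on a single file gives the per-file values. Transitions are inclusive `(first_frame, last_frame)` spans, with a cut represented as a one-frame span (also an assumption).

```python
def overlap(a, b):
    """Number of frames shared by two inclusive (first_frame, last_frame) spans."""
    return max(0, min(a[1], b[1]) - max(a[0], b[0]) + 1)


def match_transitions(reference, detected, tolerance=5):
    """Greedily pair each reference transition with at most one detection.

    Assumption (not the official rule): a detection matches when it overlaps
    the reference span widened by `tolerance` frames on each side.
    """
    used, pairs = set(), []
    for ref in reference:
        widened = (ref[0] - tolerance, ref[1] + tolerance)
        for j, det in enumerate(detected):
            if j not in used and overlap(widened, det) > 0:
                used.add(j)
                pairs.append((ref, det))
                break
    return pairs


def run_precision_recall(files, tolerance=5):
    """Pool matches over all files of a run; files is a list of (reference, detected)."""
    matched = total_ref = total_det = 0
    for reference, detected in files:
        matched += len(match_transitions(reference, detected, tolerance))
        total_ref += len(reference)
        total_det += len(detected)
    precision = matched / total_det if total_det else 0.0
    recall = matched / total_ref if total_ref else 0.0
    return precision, recall


def frame_precision_recall(ref, det):
    """Frame-precision and frame-recall for one matched gradual transition."""
    shared = overlap(ref, det)
    return shared / (det[1] - det[0] + 1), shared / (ref[1] - ref[0] + 1)


reference = [(100, 100), (200, 230)]
detected = [(102, 102), (210, 240), (400, 400)]
print(run_precision_recall([(reference, detected)]))  # precision 2/3, recall 1.0
```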

5.2 High-level feature extraction

Submissions

- Each team may submit up to 6 runs for pooling. All runs must be prioritized and all will be evaluated.

- For each feature in a run, participants will return at most 2000 shots.

- Here is a DTD for feature extraction results of one run, one for results from multiple runs, and a small example of what a site would send to NIST for evaluation. Please check your submission to see that it is well-formed.

- Please send your submission in an email to NIST (overATnistDOTgov). Indicate somewhere (e.g., in the subject line) which group you are attached to so that we match you up with the active participant's database. Send all of your runs as one file or send each run as a file but please do not break up your submission any more than that. Each run must contain results for all features listed above.

Evaluation

- The unit of testing and performance assessment will be the video shot as defined by the track's common shot boundary reference. The submitted ranked shot lists for the detection of each feature will be judged manually as follows. We will take all shots down to some fixed depth (in ranked order) from the submissions for a given feature - using some fixed number of runs from each group in priority sequence up to the median of the number of runs submitted by any group. We will then merge the resulting lists and create a list of unique shots. These will be judged manually down to some depth to be determined by NIST based on available assessor time and number of correct shots found. NIST will maximize the number of shots judged within practical limits. We will then evaluate each submission to its full depth based on the results of assessing the merged subsets. This process will be repeated for each feature.

- If the feature is perceivable by the assessor for some frame (sequence), however short or long, then we'll assess it as true; otherwise false. We'll rely on the complex thresholds built into the human perceptual systems. Search and feature extraction applications are likely - ultimately - to face the complex judgment of a human with whatever variability is inherent in that.

- Runs will be compared using precision and recall. Precision-recall curves will be used as well as a new estimate for average precision, which combines precision and recall: inferred average precision (infAP).
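The pooling step described above (the same procedure is used for search topics) can be sketched as follows. The fixed depth is left as a parameter because NIST sets it; rounding a fractional median down is an assumption, as is the input layout, where each run is a ranked list of shot ids for one feature or topic.

```python
import statistics


def build_pool(runs_by_group, depth):
    """Merge the top `depth` shots of selected runs into one list of unique shots.

    runs_by_group: dict mapping a group name to its runs for one feature or
    topic, in the group's priority order; each run is a ranked list of shot ids.
    """
    # Runs taken per group: the median number of runs submitted by a group
    # (rounded down here, an assumption when the median falls between two counts).
    runs_per_group = int(statistics.median(len(runs) for runs in runs_by_group.values()))
    pool, seen = [], set()
    for runs in runs_by_group.values():
        for run in runs[:runs_per_group]:
            for shot_id in run[:depth]:
                if shot_id not in seen:
                    seen.add(shot_id)
                    pool.append(shot_id)
    return pool


runs = {"A": [["s1", "s2", "s3"], ["s2", "s4"], ["s9"]], "B": [["s5", "s1"]]}
print(build_pool(runs, depth=2))  # ['s1', 's2', 's4', 's5']
```

Group A's third run is not pooled because the median run count here is 2.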

5.3 Search

Submissions

- The maximum number of search runs each participating group may submit is 6. All runs must be prioritized and all will be evaluated.

Each interactive run will contain one result for each and every topic using the system variant for that run. Each result for a topic can come from only one searcher, but the same searcher does not need to be used for all topics in a run. If a site has more than one searcher's result for a given topic and system variant, it will be up to the site to determine which searcher's result is included in the submitted result. NIST will try to make provision for the evaluation of supplemental results, i.e., ones NOT chosen for the submission described above. Details on this will be available by the time the topics are released.

- For each topic in a run, participants will return a list of at most 1000 shots. Here is a DTD for search results of one run, one for results from multiple runs, and a small example of what a site would send to NIST for evaluation. Please check your submission to see that it is well-formed.

- Please send your submission in an email to over at nist.gov. Indicate somewhere (e.g., in the subject line) which group you are attached to so that we match you up with the active participant's database. Send all of your runs as one file or send each run as a file but please do not break up your submission any more than that. Remember, a run will contain results for all of the topics.

Evaluation

- The unit of testing and performance assessment will be the video shot as defined by the track's common shot boundary reference. The submitted ranked lists of shots found relevant to a given topic will be judged manually as follows. We will take all shots down to some fixed depth (in ranked order) from the submissions for a given topic - using some fixed number of runs from each group in priority sequence up to the median of the number of runs submitted by any group. We will then merge the resulting lists and create a list of unique shots. These will be judged manually to some depth to be determined by NIST based on available assessor time and number of correct shots found. NIST will maximize the number of shots judged within practical limits. We will then evaluate each submission to its full depth based on the results of assessing the merged subsets. This process will be repeated for each topic.

- Per-search measures:

- average precision (definition below)

- elapsed time (for all runs)

- Per-run measure:

- mean average precision (MAP):

Non-interpolated average precision corresponds to the area under an ideal (non-interpolated) recall/precision curve. To compute this average, a precision average for each topic is first calculated. This is done by computing the precision after every retrieved relevant shot and then averaging these precisions over the total number of relevant/correct shots in the collection for that topic/feature or the maximum allowed result set size (whichever is smaller). Average precision favors highly ranked relevant shots. It allows comparison of result sets of different sizes. Submitting the maximum number of items per result set can never lower the average precision for that submission. The topic averages are combined (averaged) across all topics in the appropriate set to create the non-interpolated mean average precision (MAP) for that set. (See the TREC-10 Proceedings appendix on common evaluation measures for more information.)
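A minimal sketch of the computation just described, assuming results and truth are held as plain Python lists and sets keyed by topic (the function names and data layout are illustrative, not NIST's evaluation code):

```python
def average_precision(ranked, relevant, max_results=1000):
    """Non-interpolated AP for one topic or feature.

    ranked: submitted shot ids, best first; relevant: set of judged-relevant shot ids.
    """
    hits, precision_sum = 0, 0.0
    for rank, shot_id in enumerate(ranked[:max_results], start=1):
        if shot_id in relevant:
            hits += 1
            precision_sum += hits / rank  # precision after this relevant shot
    denominator = min(len(relevant), max_results)
    return precision_sum / denominator if denominator else 0.0


def mean_average_precision(results, truth, max_results=1000):
    """MAP: mean of per-topic AP; topics missing from results score 0."""
    scores = [average_precision(results.get(topic, []), relevant, max_results)
              for topic, relevant in truth.items()]
    return sum(scores) / len(scores)


print(average_precision(["a", "x", "b", "y"], {"a", "b", "c"}))  # (1/1 + 2/3) / 3
```

Because the denominator does not depend on how many shots were submitted, appending more shots can only add non-negative precision terms, which is why submitting the maximum result set size never lowers AP.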

5.5 Rushes exploitation

This is an exploratory task in 2006, and participants will perform their own evaluations. There will be no submissions. Results should be presented in the notebook paper and in a demonstration, poster, or possibly a talk at the workshop.

6. Milestones:

The following are the target dates for 2006.

- 1. Feb: NIST sends out Call for Participation in TRECVID 2006

- 20. Feb: Applications for participation in TRECVID 2006 due at NIST

- 1. Mar: Final versions of TRECVID 2005 papers due at NIST

- 15. Mar: LDC begins shipping 2005 data to new participants for use in training

- 1. Apr: BBC rushes proposal complete; guidelines complete

- 18. Apr: LDC begins shipping hard drives with 2006 data to all participants

- 25. Apr: ASR/MT output for feature/search test data available for download

- 14. Jul: Shot boundary test collection DVDs shipped by NIST

- 11. Aug: Search topics available from TRECVID website

- 15. Aug: Shot boundary detection submissions due at NIST for evaluation

- 21. Aug: Feature extraction task submissions due at NIST for evaluation; feature extraction donations due at NIST

- 25. Aug: Feature extraction donations available for active participants; results of shot boundary evaluations returned to participants

- 29. Aug - 13. Oct: Search and feature assessment at NIST

- 15. Sep: Search task submissions due at NIST for evaluation

- 19. Sep: Results of feature extraction evaluations returned to participants

- 18. Oct: Results of search evaluations returned to participants

- 23. Oct: Speaker proposals due at NIST

- 29. Oct: Notebook papers due at NIST

- 6. Nov: Workshop registration closes

- 8. Nov: Copyright forms due back at NIST (see Notebook papers for instructions)

- 13, 14 Nov: TRECVID Workshop at NIST in Gaithersburg, MD (Registration, agenda, etc.)

- 30. Nov: Workshop papers publicly available (slides added as they arrive)

- 1. Mar 2007: Final versions of TRECVID 2006 papers due at NIST

7. Outstanding 2006 guideline work items

Here is a list of work items that must be completed before the guidelines are considered final, together with the responsible parties.

- Rushes task defined/documented with real world correlate(s), system task with inputs and outputs, training and test data, evaluation procedure including measurement(s), and schedule. ANSWER: see above [Coordinators and interested participants]

- Source(s), formats, and delivery scheme for ASR and MT system output on news test data. ANSWER: see above [Coordinators]

- Methods for assuring comparability of interactive search results within a site despite human in the loop. ANSWER: This will be an on-going discussion among those working on interactive systems. [Coordinators and interactive search participants]

- Maximum number of runs per site defined for feature and search tasks. ANSWER: 6 per team for the search task and 6 per team for the high-level feature task. [Coordinators]

8. Results and submissions

For future use ...

9. Information for active participants

10 Contacts:

- Coordinators:

- and

- NIST contact:

- Email lists:

- Information and discussion for active workshop participants

- [email protected]

- NIST will subscribe the contact listed in your application to participate when we have received it. Additional members of active participant teams will be subscribed by NIST if they send email to indicating that they want to be subscribed and giving the email address to use, their name, and the TRECVID 2005 active participant's password. Groups may combine the information for multiple team members in one email.

Once subscribed, you can post to this list by sending your thoughts as email to [email protected], where they will be sent out to everyone subscribed to the list, i.e., the other active participants.

- General (annual) announcements about TRECVID (no discussion)

- [email protected]

- If you would like to subscribe, log on using the account to which you would like TRECVID email to be sent. Send email to [email protected] and ask her to subscribe you to trecvid. This list is used to notify interested parties about the call for participation and broader issues. Postings will be infrequent.

Date created: Monday, 24-Jan-06

For further information contact